| Year |

Experimental Studies |

Link |

BibTex |

| | | |

| 2020 |

J.M. Cadenas, M.C. Garrido, R. Martínez-España, M.A. Guillén-Navarro. Making decisions for frost prediction in agricultural crops in a softcomputing framework. Computers and Electronics in Agriculture 175, 105587, 2020. |

doi |

|

Abstract [▲/▼]

Nowadays, there are many areas of daily life that can obtain benefit from technological advances and the large amounts of information stored. One of

these areas is agriculture, giving place to precision agriculture. Frosts in crops are among the problems that precision agriculture tries to solve

because produce great economic losses to farmers. The problem of early detection of frost is a process that involves a large amount of wheather data.

However, the use of these data, both for the classification and regression task, must be carried out in an adequate way to obtain an inference with

quality. A preprocessing of them is carried out in order to obtain a dataset grouping attributes that refer to the same measure in a single attribute

expressed by a fuzzy value. From these fuzzy time series data we must use techniques for data analysis that are capable of manipulating them. Therefore,

first a regression technique based on k-nearest neighbors in a Soft Computing framework is proposed that can deal with fuzzy data, and second, this technique

and others to classification are used for the early detection of a frost from data obtained from different weather stations in the Region of Murcia (south-east

Spain) with the aim of decrease the damages that these frosts can cause in crops. From the models obtained, an interpretation of the provided information is

performed and the most relevant set of attributes is obtained for the anticipated prediction of a frost and of the temperature value. Several experiments are

carried out on the datasets to obtain the models with the best performance in the prediction validating the results by means of a statistical analysis.

|

| |

| | | |

| 2018 |

J.M. Cadenas, M.C. Garrido, R. Martínez, E. Muñoz, P.P. Bonissone. A fuzzy K-nearest neighbor classifier to deal with imperfect data. Soft Computing 22, 3313-3330, 2018. |

doi |

|

Abstract [▲/▼]

The k-nearest neighbors method (kNN) is a nonparametric, instance-based method used for regression and classification. To classify a new instance,

the kNN method computes its k nearest neighbors and generates a class value from them. Usually, this method requires that the information available

in the datasets be precise and accurate, except for the existence of missing values. However, data imperfection is inevitable when dealing with real-world

scenarios. In this paper, we present the kNNimp classifier, a k-nearest neighbors method to perform classification from datasets with imperfect value. The

importance of each neighbor in the output decision is based on relative distance and its degree of imperfection. Furthermore, by using external parameters,

the classifier enables us to define the maximum allowed imperfection, and to decide if the final output could be derived solely from the greatest weight class

(the best class) or from the best class and a weighted combination of the closest classes to the best one. To test the proposed method, we performed several

experiments with both synthetic and real-world datasets with imperfect data. The results, validated through statistical tests, show that the kNNimp classifier

is robust when working with imperfect data and maintains a good performance when compared with other methods in the literature, applied to datasets with or

without imperfection.

|

| |

| | | |

| 2013 |

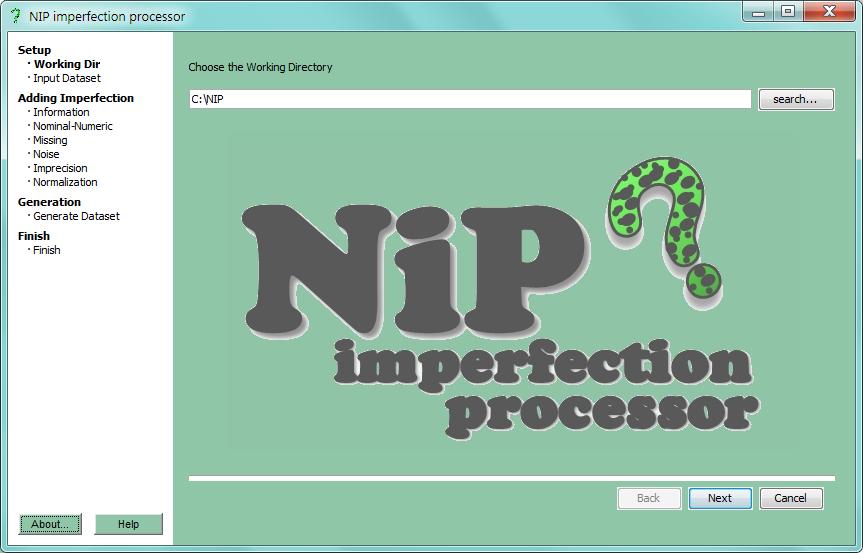

J.M. Cadenas, M.C. Garrido, R. Martínez. NIP - An Imperfection Processor to Data Mining datasets. International Journal of Computational Intelligence Systems 6 (supplement 1), 3-17, 2013. |

doi |

|

Abstract [▲/▼]

Every day there are more techniques that can work with low quality data. As a result, issues related to data quality have become more

crucial and have consumed a majority of the time and budget of data mining projects. One problem for researchers is the lack of low quality

data in order to test their techniques with this data type. Also, as far as we know, there is no software tool focused on the create/manage low

quality datasets which treats, in the widest possible way, the low quality data and helps us to create repositories with low quality datasets for

testing and comparison of data mining techniques and algorithms. For this reason, we present in this paper a software tool which can create/manage

low quality datasets. Among other things, the tool can transform a dataset by adding low quality data, removing and replacing any data,

constructing a fuzzy partition of the attributes, etc. It also allows different input/output formats of the dataset.

|

| |

| | | |

| 2013 |

J.M. Cadenas, M.C. Garrido, R. Martínez. Feature subset selection Filter-Wrapper based on low quality data. Expert Systems with Applications 40(16), 6241-6252, 2013. (Datasets used in this paper: Go to datasets) |

doi |

|

Abstract [▲/▼]

Today, feature selection is an active research in machine learning. The main idea of feature selection is to choose a subset of available features,

by eliminating features with little or no predictive information, as well as redundant features that are strongly correlated. There are a lot of approaches

for feature selection, but most of them can only work with crisp data. Until now there have not been many different approaches which can directly work

with both crisp and low quality (imprecise and uncertain) data. That is why, we propose a new method of feature selection which can handle both crisp and

low quality data. The proposed approach is based on a Fuzzy Random Forest and it integrates filter and wrapper methods into a sequential search procedure

with improved classification accuracy of the features selected. This approach consists of the following main steps: (1) scaling and discretization process

of the feature set; and feature pre-selection using the discretization process (filter); (2) ranking process of the feature pre-selection using the Fuzzy

Decision Trees of a Fuzzy Random Forest ensemble; and (3) wrapper feature selection using a Fuzzy Random Forest ensemble based on cross-validation. The

efficiency and effectiveness of this approach is proved through several experiments using both high dimensional and low quality datasets. The approach

shows a good performance (not only classification accuracy, but also with respect to the number of features selected) and good behavior both with high

dimensional datasets (microarray datasets) and with low quality datasets.

|

| |

| | | |

| 2012 |

J.M. Cadenas, M.C. Garrido, R. Martínez, P.P. Bonissone. Extending information processing in a Fuzzy Random Forest ensemble. Soft Computing 16(5), 845-861, 2012. (Datasets used in this paper: Go to datasets) |

doi |

|

Abstract [▲/▼]

Imperfect information inevitably appears in real situations for a variety of reasons. Although efforts have been made to incorporate imperfect

data into classification techniques, there are still many limitations as to the type of data, uncertainty, and imprecision that can be handled. In

this paper, we will present a Fuzzy Random Forest ensemble for classification and show its ability to handle imperfect data into the learning and

the classification phases. Then, we will describe the types of imperfect data it supports. We will devise an augmented ensemble that can operate

with others type of imperfect data: crisp, missing, probabilistic uncertainty, and imprecise (fuzzy and crisp) values. Additionally, we will perform

experiments with imperfect datasets created for this purpose and datasets used in other papers to show the advantage of being able to express the

true nature of imperfect information.

|

| |

| | | |

| 2012 |

J.M. Cadenas, M.C. Garrido, R. Martínez, P.P. Bonissone. OFP_CLASS: A hybrid method to generate optimized fuzzy partitions for classification. Soft Computing 16(4), 667-682, 2012. |

doi |

|

Abstract [▲/▼]

The discretization of values plays a critical role in data mining and knowledge discovery. The representation of information through intervals

is more concise and easier to understand at certain levels of knowledge than the representation by mean continuous values. In this paper, we

propose a method for discretizing continuous attributes by means of fuzzy sets, which constitute a fuzzy partition of the domains of these attributes.

This method carries out a fuzzy discretization of continuous attributes in two stages. A fuzzy decision tree is used in the first stage to propose

an initial set of crisp intervals, while a genetic algorithm is used in the second stage to define the membership functions and the cardinality of

the partitions. After defining the fuzzy partitions, we evaluate and compare them with previously existing ones in the literature.

|

| |

| | | |

| 2010 |

P.P. Bonissone, J.M. Cadenas, M.C. Garrido, R.A. Díaz-Valladares. A Fuzzy Random Forest. Int. Journal of Approximate Reasoning 51(7), 729-747, 2010. |

doi |

|

Abstract [▲/▼]

When individual classifiers are combined appropriately, a statistically significant increase in classification accuracy is usually obtained.

Multiple classifier systems are the result of combining several individual classifiers. Following Breiman's methodology, in this paper a multiple

classifier system based on a ''forest'' of fuzzy decision trees, i.e., a fuzzy random forest, is proposed. This approach combines the robustness

of multiple classifier systems, the power of the randomness to increase the diversity of the trees, and the flexibility of fuzzy logic and fuzzy

sets for imperfect data management. Various combination methods to obtain the final decision of the multiple classifier system are proposed and

compared. Some of them are weighted combination methods which make a weighting of the decisions of the different elements of the multiple classifier

system (leaves or trees). A comparative study with several datasets is made to show the efficiency of the proposed multiple classifier system and the

various combination methods. The proposed multiple classifier system exhibits a good accuracy classification, comparable to that of the best classifiers

when tested with conventional data sets. However, unlike other classifiers, the proposed classifier provides a similar accuracy when tested with imperfect

datasets (with missing and fuzzy values) and with datasets with noise.

|

| |

| | | |

| 2010 |

M.C. Garrido, J.M. Cadenas, P.P. Bonissone. A Classification and Regression Technique to handle Heterogeneous and Imperfect Information. Soft Computing 14(11), 1165-1185, 2010. |

doi |

|

Abstract [▲/▼]

Imperfect information inevitably appears in real situations for a variety of reasons. Although efforts have been made to incorporate imperfect

data into learning and inference methods, there are many limitations as to the type of data, uncertainty and imprecision that can be handled. In

this paper, we propose a classification and regression technique to handle imperfect information. We incorporate the handling of imperfect information

into both the learning phase, by building the model that represents the situation under examination, and the inference phase, by using such a model.

The model obtained is global and is described by a Gaussian mixture. To show the efficiency of the proposed technique, we perform a comparative study

with a broad baseline of techniques available in literature tested with several data sets.

|

| |

| | | |

|

|

|

|